#147 - How to Verify AI Citations, Summaries and Statements: The TRACE 5-Check Framework

6 May 2026

Read time: 3 minutes

A couple of weeks ago I ran a webinar with over a thousand researchers from more than thirty countries.

We covered a lot of ground, but one concern came up more than anything else.

"How do I know the AI is not misrepresenting the paper it just summarised?"

It is a fair question. And one attendee gave the answer better than I could.

He told the room that an AI tool had misstated the findings of his own published paper.

He only caught it because he was the author.

If he had been anyone else, that wrong summary would have ended up in someone's literature review as fact.

That stuck with me. Because the truth is, most of us would never notice.

We trust the summary, grab the citation, and move on.

And that is where careers start to get damaged.

Today I am giving you the exact framework I now teach every mentee for checking anything that came through an AI tool before it goes anywhere near their thesis or paper.

If you are using AI to help with your literature review and you are not running these five checks, you are building your research on a foundation you have not actually tested.

The Problem Most Researchers Do Not See Coming

Here is what is happening in practice.

- A doctoral researcher uses an AI tool to search for relevant papers.

- The tool returns a list of sources with summaries.

- The summaries look good.

- The citations look real.

The researcher copies them into their literature review, adds a few of their own words around them, and moves on to the next section.

Six months later, their examiner picks up one of those citations and asks what the authors actually argued.

The researcher cannot answer, because they never read the paper.

They read the AI summary of the paper, which is not the same thing.

And here is the part that really matters: AI summaries do not just get things wrong occasionally. They get things wrong in a specific way.

They flatten nuance, drop limitations, overstate findings, and strip out the context that makes a result meaningful.

A paper that found a small effect in a very specific population gets summarised as if it found a large effect that applies to everyone.

The numbers might even be right, but the meaning is completely different.

Your examiner or reviewer will catch this.

Maybe not on the first citation, but across a forty-paper review?

They will notice the pattern. And when they do, it is not just one citation that comes into question. It is all of them.

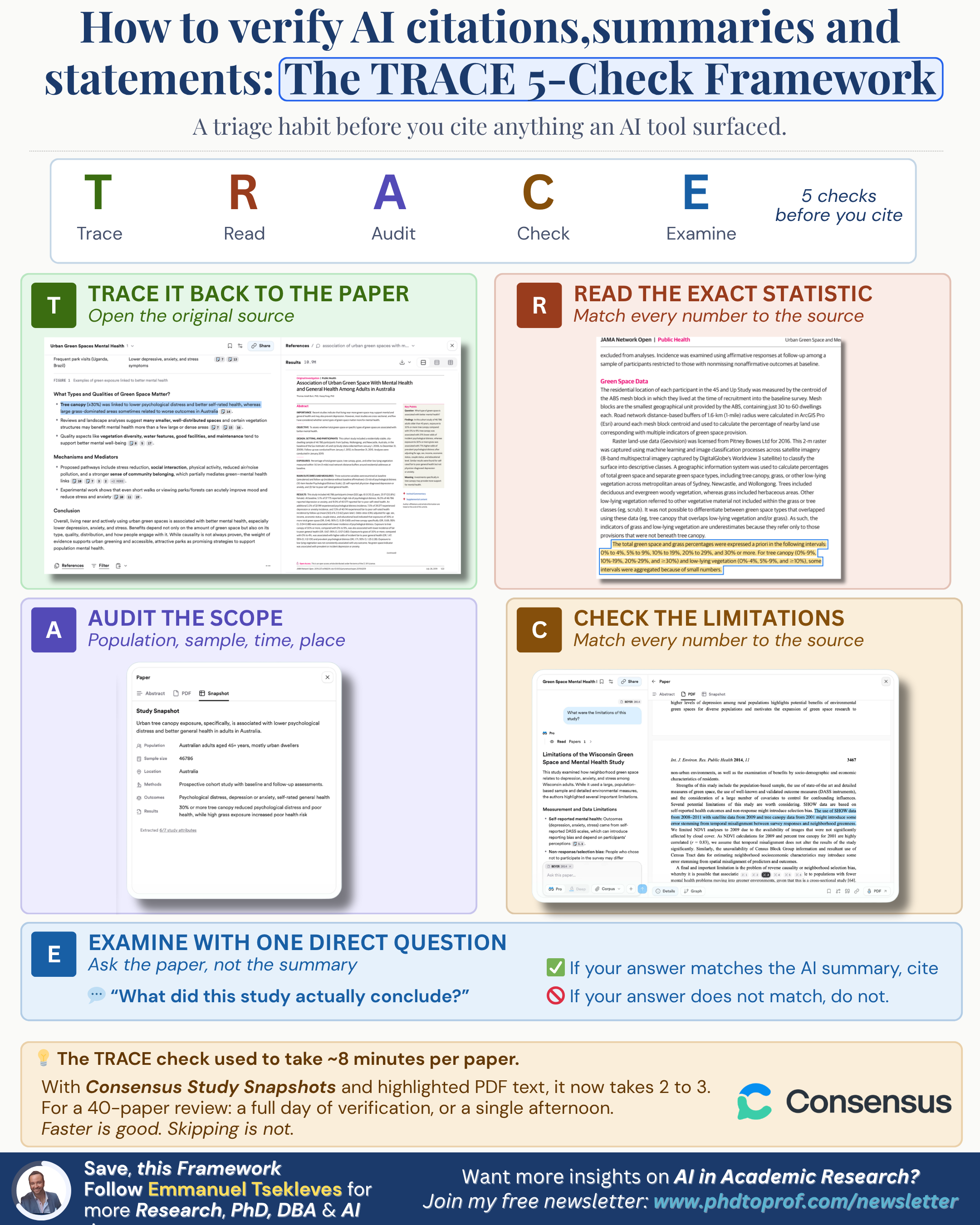

The TRACE Framework: 5 Checks Before You Cite

I put this together after seeing the same mistakes show up across multiple mentees' work.

Five checks, in order, for every single source that came through an AI tool.

I call it TRACE because that is what you are doing: tracing the AI summary back to what the paper actually says.

T - Track Down the Original

Open the actual paper. Not the snapshot. Not the abstract. Not the AI-generated summary card. The full paper.

This sounds obvious, but you would be surprised how often it does not happen.

AI tools make it incredibly easy to grab a citation without ever opening the source, and that convenience is exactly what makes it dangerous.

If you cannot access the full paper, you cannot cite it. Full stop.

A citation you have not read is not a citation. It is a guess with a reference number attached.

R - Read the Real Numbers

If the AI summary says "47% improvement" or "significant positive correlation" or "three themes emerged," go and find that exact claim in the paper.

Page number, section, table, figure. Put your finger on it.

If it is not there, the citation is dead.

- Do not try to find something close enough.

- Do not assume the AI paraphrased it differently.

If the number or finding is not in the paper as the AI described it, that summary is wrong and it cannot go in your work.

This is the check that catches the most problems.

AI tools are surprisingly good at producing numbers that sound right but are not in the source.

Sometimes they pull a number from one section and attach it to a finding from another.

Sometimes they generate a statistic that does not appear anywhere in the paper at all. You will only know if you look.
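
If you have the paper available as plain text, a rough first pass at this check can be scripted. The sketch below is only an illustration and not part of TRACE itself: the function name `find_unsupported_numbers` and the sample strings are invented, and it only flags numbers from the summary that appear nowhere in the paper.

```python
import re

# Matches integers, decimals, and percentages such as "12", "4.7", "47%".
# Hypothetical helper for illustration; it will miss forms like "47 percent".
NUMBER_PATTERN = re.compile(r"\d+(?:\.\d+)?%?")


def find_unsupported_numbers(summary: str, paper_text: str) -> list[str]:
    """Return numbers quoted in the summary that never appear in the paper text."""
    paper_numbers = set(NUMBER_PATTERN.findall(paper_text))
    missing = []
    for number in NUMBER_PATTERN.findall(summary):
        if number not in paper_numbers and number not in missing:
            missing.append(number)
    return missing


# Invented example strings, not taken from any real paper.
summary = "The intervention produced a 47% improvement across 12 sites."
paper_text = "Scores improved by 4.7% (n = 12) relative to baseline."
print(find_unsupported_numbers(summary, paper_text))  # ['47%']
```

An empty result does not mean the summary is right. A number can appear in the paper and still be attached to the wrong finding, so you still have to locate it on the page yourself.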

A - Assess the Scope

AI summaries flatten scope.

They take a study that examined twelve managers in one Danish hospital and present the findings as if they apply to managers everywhere.

The population, the sample size, the country, the time period, the sector: all of the boundaries that make a finding meaningful get quietly dropped.

Your job is to put them back.

When you read the original paper, write down four things: who was studied, how many, where, and when.

If any of those do not match what the AI summary implied, you need to adjust how you cite that source.

A finding from twelve Danish managers is still valuable.

But it is valuable as evidence from twelve Danish managers, not as a universal truth about management.
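
If you keep your reading notes in a structured form, the four scope items can be recorded side by side with what the summary implied. This is a minimal sketch under assumptions: the `Scope` class and `scope_mismatches` function are hypothetical names, the comparison is a plain string match on notes you type in yourself, and the year in the example is invented.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class Scope:
    """The four scope items: who was studied, how many, where, and when."""
    who: str
    how_many: str
    where: str
    when: str


def scope_mismatches(paper: Scope, summary: Scope) -> list[str]:
    """Return the names of scope items where the summary differs from the paper."""
    return [
        f.name
        for f in fields(Scope)
        if getattr(paper, f.name).strip().lower()
        != getattr(summary, f.name).strip().lower()
    ]


# The twelve Danish managers come from the example above; the year is invented.
paper = Scope(who="hospital managers", how_many="12",
              where="one Danish hospital", when="2019")
summary = Scope(who="managers", how_many="not stated",
                where="not stated", when="not stated")
print(scope_mismatches(paper, summary))  # ['who', 'how_many', 'where', 'when']
```

Any item that shows up in the result is a boundary the summary dropped and that your citation needs to restore.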

C - Check the Limitations

This is where AI is weakest and where your examiner will catch you fastest.

Every good paper has a limitations section.

It tells you what the study did not do, what the authors are not claiming, and where the findings should not be applied.

AI summaries almost never include this information, because they are designed to tell you what a paper found, not what it did not find.

But limitations are exactly what examiners and reviewers look for when they read your literature review.

They want to see that you understood not just what a study concluded, but how far that conclusion actually reaches.

If you cite a paper without knowing its limitations, you are presenting it as stronger evidence than the authors themselves would claim.

And that will come up in your viva.

E - Evaluate with One Direct Question

After you have read the paper, close it and ask yourself one question: what did this study actually conclude?

Say it out loud. In your own words. One sentence.

Now compare that sentence to what the AI summary told you.

If they match, you are safe to cite.

If they do not, something got lost in the summary and you need to go with what the paper actually says, not what the AI told you it says.

This final check takes about thirty seconds, but it is the one that saves you from the most common mistake I see: researchers who can quote the AI summary of a paper fluently but cannot tell you what the paper actually argued.

Your examiner will know the difference. Every time.
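
One way to keep track of the five checks across many sources is a small record per citation. The sketch below is an assumption about how you might do that, not a prescribed tool: the `TraceRecord` class, its field names, and the placeholder citation are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TraceRecord:
    """One AI-sourced citation and the TRACE checks completed for it."""
    citation: str
    tracked_down_original: bool = False  # T: full paper opened and read
    real_numbers_located: bool = False   # R: each claimed finding found in the paper
    scope_assessed: bool = False         # A: who, how many, where, when written down
    limitations_checked: bool = False    # C: limitations section read
    conclusion_restated: bool = False    # E: own one-sentence conclusion compared

    def outstanding_checks(self) -> list[str]:
        """Return the TRACE letters of checks not yet completed, in order."""
        checks = [
            ("T", self.tracked_down_original),
            ("R", self.real_numbers_located),
            ("A", self.scope_assessed),
            ("C", self.limitations_checked),
            ("E", self.conclusion_restated),
        ]
        return [letter for letter, done in checks if not done]

    def ready_to_cite(self) -> bool:
        """True only when all five checks have been completed."""
        return not self.outstanding_checks()


record = TraceRecord(
    citation="Placeholder citation",
    tracked_down_original=True,
    real_numbers_located=True,
)
print(record.outstanding_checks())  # ['A', 'C', 'E']
print(record.ready_to_cite())       # False
```

The order of the letters matters here in the same way it does in the framework: there is little point ticking C or E for a paper you have not opened.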

How Long This Actually Takes

When I first started teaching this to mentees, the full TRACE check took about eight minutes per paper.

That felt like a lot, especially for someone working through forty or fifty sources.

But here is what I have found: with newer AI tools that show you the exact passage they drew on and highlight it inside the PDF itself, the process is now closer to two or three minutes per source.

Tools like Consensus, for example, give you study snapshots that link directly to the specific findings in the paper, so you can run the first two checks almost immediately without scrolling through twenty pages looking for the right paragraph.

For a forty-paper review, that is the difference between a full day of checking and a single afternoon.
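
The arithmetic behind that comparison can be checked quickly. This sketch uses only the per-paper figures given above; the function name `review_hours` is just illustrative.

```python
def review_hours(papers: int, minutes_per_paper: float) -> float:
    """Total checking time in hours for a review of `papers` sources."""
    return papers * minutes_per_paper / 60


papers = 40
print(round(review_hours(papers, 8), 1))  # 5.3
print(round(review_hours(papers, 2), 1))  # 1.3
print(round(review_hours(papers, 3), 1))  # 2.0
```

That is a little over five hours at eight minutes per paper, against roughly one and a half to two hours at two to three minutes per paper.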

Two to three minutes per paper is not a burden.

It is the cost of being able to defend every citation in your thesis.

And when your examiner picks one at random in your viva and asks what the authors actually found, you will be glad you spent those minutes.

When You Do Not Need TRACE

To be clear, this framework is for sources that came to you through an AI tool.

If you found a paper yourself, read it yourself, and wrote your notes yourself, you do not need to run all five checks.

Your normal reading process already covers most of this.

But the moment an AI summary sits between you and the original paper, TRACE kicks in.

That includes AI-generated literature searches, AI summaries of papers, AI-suggested citations, and any tool that gives you a finding without making you read the source first.

Key Takeaways

- AI summaries flatten nuance, drop limitations, and overstate scope. If you cite from a summary without checking the original, you are building on something you have not verified.

- TRACE gives you five checks in order: Track down the original, Read the real numbers, Assess the scope, Check the limitations, Evaluate with one direct question.

- The whole process takes two to three minutes per paper with modern tools. That is a small price for being able to defend every citation in your thesis.

→ Your Action Plan for This Week

- Pick five citations from your current literature review that came through an AI tool and run TRACE on each one.

- For every source, write down the real population, sample size, country, and time period. If any of those are different from what the AI summary implied, fix how you cite it.

- Read the limitations section of each paper and add one sentence to your literature review acknowledging what the study did not cover.

- From now on, do not cite anything from an AI search without opening the original first. No exceptions.

AI speeds up discovery. Verification is still yours.

If you are at proposal stage, I built a free 10-criterion self-assessment based on my experience examining 45+ theses. Takes 12 minutes. You get personalised feedback: phdtoprof.com/scorecard

Need personalised support? Ask about our Premium 1:1 PhD Mentorship Programme and PhD Thesis Review Service.

⭐ BONUS RESOURCE ⭐

Bonus 1: TRACE Verification Checklist

I have turned the TRACE framework into a one-page printable checklist you can keep next to your desk. For each of the five checks, it gives you the question to ask, what to look for, and a tick box to confirm you have done it. There is also space to note the real numbers, scope, and limitations for each source so you have a record when your examiner asks.

📥 Download the TRACE Verification Checklist here.

This is the kind of resource that will be part of our upcoming premium newsletter for subscribers who want deeper tools and practical frameworks.

Bonus 2: AI in Academic Research Webinar Slides

If you missed the recent webinar with over 1,000 researchers from 30+ countries, you can download the full slide deck here. It covers responsible AI use in doctoral research, the tools that are changing how we search and verify, and the mistakes I am seeing most often as an examiner.

📥 Download the webinar slides here.

For now, it is yours at no cost.

Well, that’s it for today.

Until next week,

Prof. Emmanuel Tsekleves

